A new study from Harvard University found that students using an AI tutor learnt more than twice as much as those in one of Harvard’s best hands-on classrooms, in less time. The study was published in Scientific Reports in June 2025 (Kestin et al., 2025), and the results caught my attention.

But before we get too excited, this study comes with important context. When I review research like this, I look for what actually translates to the children I study, not just the headline. This paper is promising, but the full picture is more nuanced than “AI beats teachers.”

What the study actually found

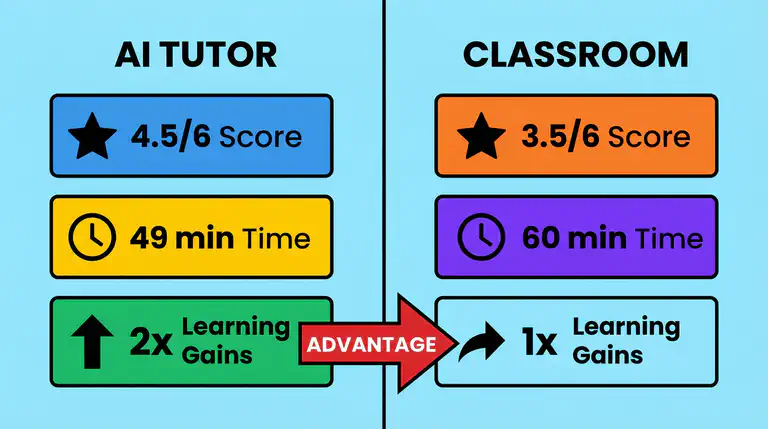

The Harvard team tested 194 university students across two weeks. Every student tried both approaches: learning physics in a hands-on classroom with group work and instructor support, and learning the same material at home with a custom-built AI tutor.

On a test afterwards, students scored about 30% higher after using the AI tutor. The researchers confirmed this gap was statistically reliable, not a fluke.

What makes this striking is that the classroom was not a boring lecture. It was an active, well-run class with expert guidance, already one of the most effective teaching methods we know. The AI tutor beat it anyway, delivering gains close to what researchers have historically only seen with one-on-one human tutoring (Bloom, 1984; Nickow, Oreopoulos, & Quan, 2020).

What this study does not tell us

Here is where I want to be careful, because the headlines write themselves and the reality is more complicated.

This was a study of Harvard undergraduates learning physics, not five-year-olds learning to read. The sample was 194 students, and the AI tutor was custom-built with carefully designed prompts, a very different experience from the apps most families download. We do not yet know if the same results hold for younger children, different subjects, or children who struggle with learning.

The study is valuable. But it would be misleading to treat it as proof that any AI tutor will work for any child.

What made this AI tutor different

Here is something that did surprise me: it was not the AI technology itself that drove the results. It was the design.

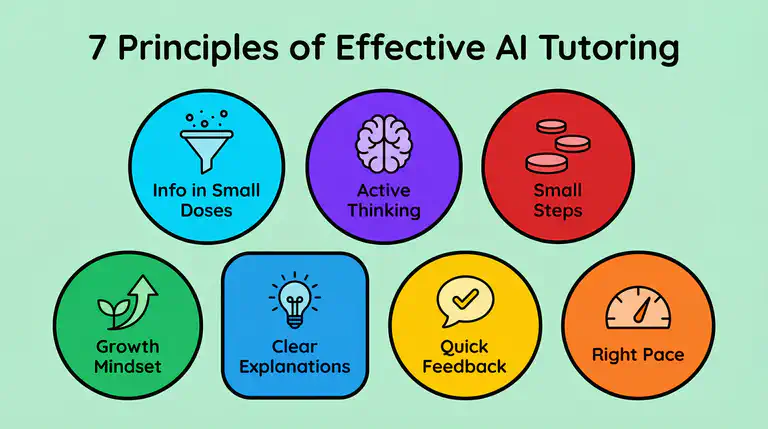

The researchers built their tutor around seven teaching principles: keeping students actively thinking, controlling how much new information appears at once, encouraging a growth mindset, breaking content into small steps, giving accurate explanations, providing feedback right when needed, and letting each student move at their own speed.

Generic chatbots do none of this by default. Jose et al. (2025) in Frontiers in Psychology found that students using unstructured AI tools got through 48% more problems but scored 17% lower on understanding. The AI did the thinking for them. Without careful design, AI for learning can actually make things worse.

What this means for your child

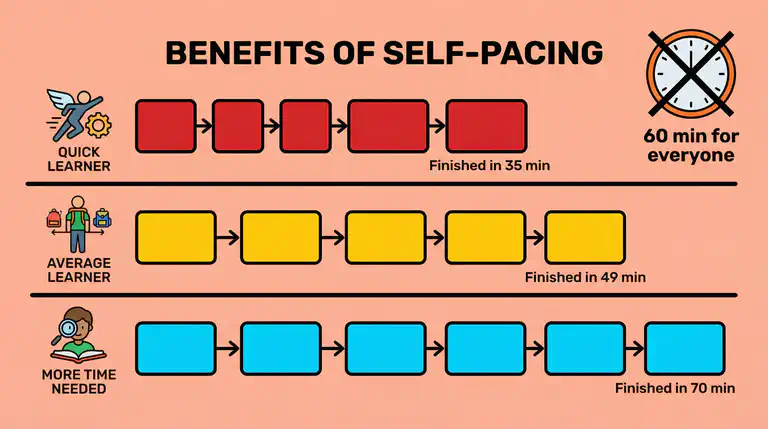

The most practical finding is about pacing. Students who felt class moved too fast spent longer with the AI tutor, giving themselves extra time. Students who felt class was too slow finished faster. Because the tutor was self-paced, each learner could take exactly the time they needed.

I see this same need in the data from thousands of children using Bookbot: children progress at wildly different rates. A child who needs extra time on letter-sound connections should not be rushed, and one who has mastered blending should not be held back. Personalised learning AI makes this possible.

A recent review by Létourneau et al. (2025) in npj Science of Learning looked across 28 studies involving nearly 4,600 children and found a consistent pattern: AI tutoring systems outperformed traditional teaching when they combined personalised pacing, immediate feedback, and step-by-step guidance. The evidence is building, but we still need more research with younger learners and in real-world home settings.

Practical strategies for parents

-

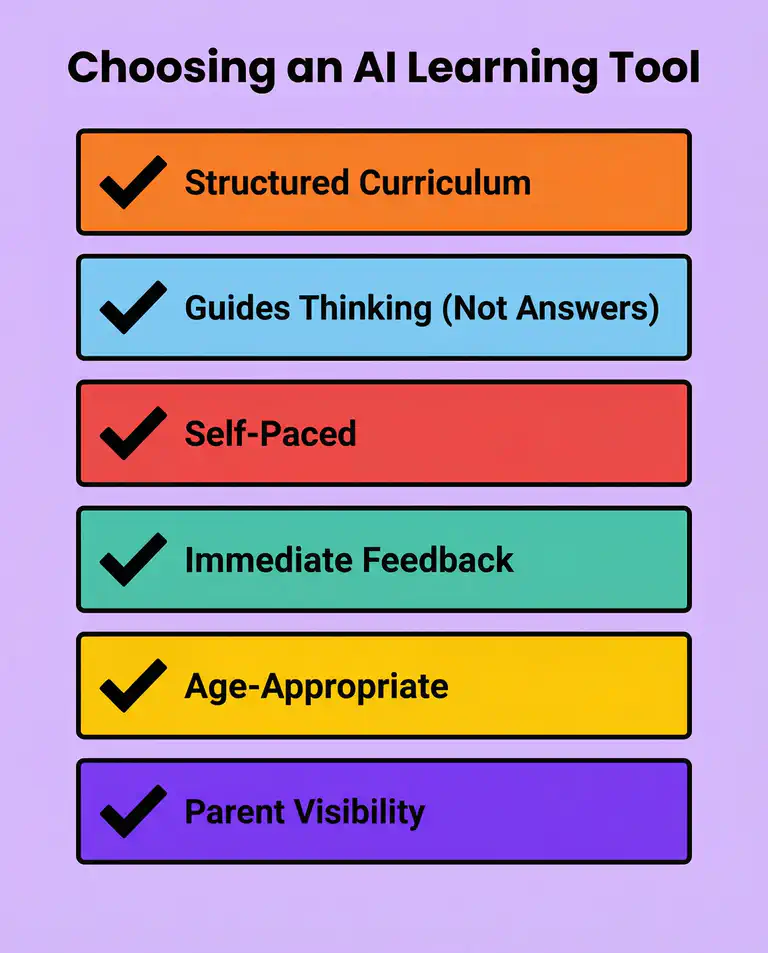

Look for structure, not just AI. This study shows that how an AI tutor is designed matters more than the technology behind it. Choose tools that guide your child through a learning sequence rather than letting them ask random questions.

-

Use AI as a complement, not a replacement. Whether it is AI homework help or reading practice, these tools work best alongside teachers and parents. The researchers recommend using AI tutoring to build foundational knowledge so classroom time can focus on collaboration and deeper thinking.

-

Watch for cognitive offloading. If your child is getting answers without effort, the tool is not teaching. Effective AI teaching should feel like a patient tutor asking questions, not a search engine delivering answers. This is why we built Bookbot to listen to children read aloud: the child does the work, and the AI supports only when needed.

-

Be a healthy sceptic. Not every app that calls itself an “AI tutor” has the careful design that made the Harvard study work. Look for tools built on structured curricula with evidence behind them, not just chatbot wrappers.

The road ahead

This Harvard study is one of the strongest pieces of evidence that AI tutoring, when designed thoughtfully, can deliver impressive learning gains. But it is a starting point, not the final word. The biggest open question is whether these results hold for younger children learning foundational skills like reading, in the messy reality of everyday family life.

That is exactly what we are working to find out. Through Bookbot’s research collaboration with Flinders University, my PhD focuses on understanding how AI-powered reading tools can genuinely help real children, in real homes, become confident readers. We believe every child deserves the benefit of this work.

If this excites you as much as it excites me, try Bookbot with your child. Every family that joins brings us one step closer to ensuring no child misses out on the kind of personalised reading support that can change their future.

References

Bloom, B. S. (1984). The 2 sigma problem: The search for methods of group instruction as effective as one-to-one tutoring. Educational Researcher, 13(6), 4-16. https://doi.org/10.3102/0013189X013006004

Jose, B., Cherian, J., Verghis, A. M., Varghise, S. M., Mumthas, S., & Joseph, S. (2025). The cognitive paradox of AI in education: Between enhancement and erosion. Frontiers in Psychology, 16. https://doi.org/10.3389/fpsyg.2025.1550621

Kestin, G., Miller, K., Klales, A., Milbourne, T., & Ponti, G. (2025). AI tutoring outperforms in-class active learning: An RCT introducing a novel research-based design in an authentic educational setting. Scientific Reports, 15, 17458. https://doi.org/10.1038/s41598-025-97652-6

Létourneau, A., Martineau, M. D., Charland, P., Karran, J. A., Boasen, J., & Léger, P. M. (2025). A systematic review of AI-driven intelligent tutoring systems (ITS) in K-12 education. npj Science of Learning, 10. https://doi.org/10.1038/s41539-025-00320-7

Nickow, A., Oreopoulos, P., & Quan, V. (2020). The impressive effects of tutoring on PreK-12 learning: A systematic review and meta-analysis of the experimental evidence (EdWorkingPaper No. 20-267). Annenberg Institute at Brown University. https://doi.org/10.26300/eh0c-pc52